The AI Revolution: Intercom’s Big Bet

And why building custom models might be the key to staying ahead

Intro

Ambition is the ultimate leveller in business. It shapes industries by raising the bar, forcing competitors to step up or succumb to stasis.

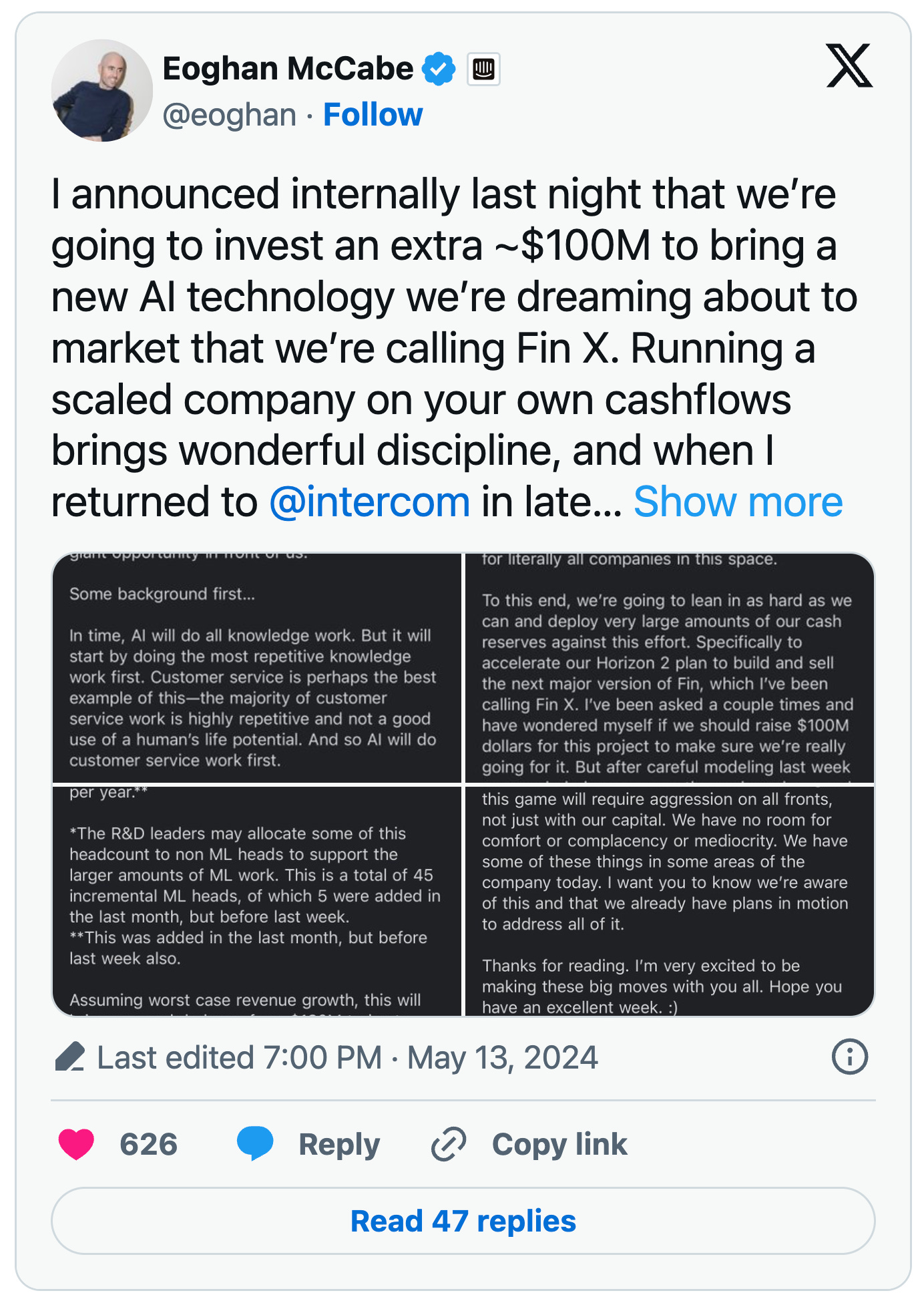

Last week the CEO of Intercom, Eoghan McCabe, announced that the company would spend $100m to bring a new AI technology called Fin X to market. The investment will be funded from Intercom’s own cash flows. It’s a bold and inspirational move by an Irish company that has never shied away from making big bets on the global stage.

The customer service market is like the canary in the coal mine. It’s a strong indicator of how AI will transform most industries. It’s clear that autonomous agents will replace the majority of human-led support. So the incumbents in the space are all thinking about the initiatives they need to take today, in order to stay relevant tomorrow. But while other teams are still strategising, the Intercom executive team just went all in and bet the house on AI. The bar has been set.

If you squint hard enough, we think you’ll see the signs of a company strategy that is going to play out across every major software category. Intercom's recent announcement could signal the beginning of a significant shift in how software companies approach model development. In the age of artificial intelligence, the decision to build or buy has become even more important.

Model MVP

If AI represents the next evolution in computing, each model can be seen as a probabilistic thinking machine. Its ability to unlock intelligence depends on how it was developed and the specific tasks it is designed to perform. In the same way that you need to choose a computer to fit your needs, companies need to select a model that can maximise business value for their specific domain.

For customer service, the ideal model would enable an AI agent to emulate the interactions between human support agents and customers. The agent would demonstrate high empathy. It would retrieve the right context before making suggestions. It would never hallucinate or make stuff up. And it would respond in a fraction of the time that it takes humans to answer support queries.

At the risk of oversimplifying, that’s effectively what Intercom have developed already. They had previously built out their own ML solutions in-house. When ChatGPT launched in November 2022, they started working closely with OpenAI. They’ve had access to early APIs and built an AI product portfolio on top of OpenAI’s base model (in addition to leveraging their internal ML expertise).

Using the best third-party models to bring Fin to market was a great decision. There was an overhang in tech capabilities and developing an LLM from scratch would have been too slow and too expensive. By partnering with OpenAI, Intercom has been able to ship AI-native features before their competitors. As a result, they’ve carved out a position as the AI leader in the space.

A General Purpose Tax

It’s fair to assume that a lot of the funding for Fin X will go towards developing custom models tailored for Intercom's needs. Hiring over 50 ML Engineers will support this goal, indicating that Intercom has decided to build rather than continue buying. A model that takes you from 0 to 1 might not be the best option when scaling from 1 to N.

Given that chat support is mostly textual data, it might make sense for Intercom to fork their own models from an existing open-source LLM like Meta Llama 3. This approach would allow them to start with a robust foundational model. Meta reportedly invested over $100 million in developing Llama 3, training it on 24,000 GPUs, each costing around $40,000.

It’s economical to leverage existing training cycles on publicly available information including sources like company wikis, help desk examples and support articles. This training data also supports emergent behaviours like reasoning and language capabilities which are critical to workflows like solving support tickets.

But these large ‘God’ models also contain training data that’s not relevant for Intercom’s needs. Do they care if the same model is also 10x better at diagnosing x-ray images? While OpenAI’s models will continue to improve in a general way, businesses using OpenAI APIs are essentially paying for enhancements across the entire neural network's latent space (i.e. the multi-dimensional space where data is represented in the model). This leads to a general-purpose tax. You’re ultimately paying for compute and training you might never use.

By developing their own models, Intercom can optimise for their domain without bearing the cost of unnecessary improvements. It’s also becoming apparent that the latest gains in GPT-4 and GPT-4o are mostly coming from post-training, not pre-training. Post-training includes techniques like reinforcement learning from human feedback (RLHF), fine-tuning and quantization.

If Intercom wants to do additional fine-tuning on top of OpenAI’s model, they would need to pay a premium above the base cost to use that custom model. By deploying their own models, they can run domain specific post-training for as long as they need to. This should lead to cheaper, more performant models over time.

Unit Economics

Another key factor to consider is the unit economics of servicing each AI-led support ticket. Proprietary LLMs have a billing system which is based on the volume of input and output tokens used over a given period. For example, GPT-4 Turbo charges $10 per million input tokens and $30 per million output tokens (GPT-4o offers a 50% discount on these rates).
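As a rough illustration of the per-token billing described above, the sketch below computes the cost of a single request from the rates quoted in this section. The function name, the dictionary layout and the example token counts are assumptions made for illustration; they are not part of any OpenAI SDK, and published rates change over time.

```python
# Prices in USD per one million tokens, as quoted in the text above.
# The GPT-4o figures apply the 50% discount mentioned there.
PRICES_PER_MILLION = {
    "gpt-4-turbo": {"input": 10.00, "output": 30.00},
    "gpt-4o": {"input": 5.00, "output": 15.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request under simple per-token billing."""
    rates = PRICES_PER_MILLION[model]
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical request: a 1,500-token prompt and a 300-token reply.
    for model in PRICES_PER_MILLION:
        print(model, round(request_cost(model, 1_500, 300), 4))
```

A single request costs only a few cents at these rates; the cost matters once it is multiplied across many turns and many tickets.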

Token costs can become significant in AI use cases like customer service, where they are driven by both the volume of support tickets and the length of each support conversation.

To better understand the cost breakdown, it’s important to note that the current suite of LLMs is stateless (i.e. they can’t remember previous interactions). As a result, the chat history must be resent every time the AI replies in a given thread.

This means that billing per token applies to the entire history of the growing conversation for each interaction (including the original system prompt). Because every turn resends all earlier turns, the cumulative number of billed input tokens grows roughly quadratically with the number of turns. It’s not a linear relationship.
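To make the growth concrete, here is a minimal sketch that counts billed input tokens for a conversation in which the full history is resent on every turn. It assumes every customer message and every AI reply has a fixed length, which real conversations do not; the function names and the token counts in the example are hypothetical.

```python
def billed_input_tokens(turns: int, system_tokens: int,
                        user_tokens: int, reply_tokens: int) -> int:
    """Sum the input tokens billed across all turns of one conversation."""
    total = 0
    history = system_tokens  # the system prompt is part of every request
    for _ in range(turns):
        history += user_tokens   # the new customer message joins the history
        total += history         # the whole history is sent and billed as input
        history += reply_tokens  # the AI reply joins the history for the next turn
    return total


def billed_input_tokens_closed_form(turns: int, system_tokens: int,
                                    user_tokens: int, reply_tokens: int) -> int:
    """Same total as above, written out to expose the turns-squared terms."""
    n = turns
    return (n * system_tokens
            + user_tokens * n * (n + 1) // 2
            + reply_tokens * n * (n - 1) // 2)


if __name__ == "__main__":
    # Hypothetical sizes: 500-token system prompt, 100-token questions,
    # 150-token answers.
    for n in (5, 10, 20):
        print(n, billed_input_tokens(n, 500, 100, 150))
```

The closed form shows where the quadratic term comes from: the message history contributes a sum of the form 1 + 2 + ... + n, while the system prompt adds only a linear term. Doubling the number of turns therefore more than doubles the billed input tokens.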

So how does Intercom pass this cost through to its customers? Fin AI Agent is priced at $0.99 per resolution. It’s a clever business model which aligns incentives and ensures that their AI is priced on a value basis. According to Intercom’s support article:

You only pay when Fin does what you care about most – resolving a customer’s question. A resolution is counted when, following the last AI Answer or Custom Answer in a conversation, the customer confirms the answer provided is satisfactory or exits the conversation without requesting further assistance.

Taking into account the average length of a support chat in tokens, and quadratic billing, we’d estimate that it costs Intercom between $0.10 and $0.50 to service each conversation using GPT-4 Turbo. With a 50% resolution rate by Fin, Intercom is likely making a small margin on each automated interaction. However, for the other 50% of cases where there isn't a successful resolution, the OpenAI cost cannot be passed through to the end customer. In these instances, Intercom has to cover the API usage costs themselves.
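The margin argument above can be sketched as a small expected-value calculation. The $0.99 price and the 50% resolution rate come from the text, and the $0.10 to $0.50 cost range is our own estimate from the same paragraph. The function names are hypothetical, and the sketch ignores every cost other than model API usage.

```python
PRICE_PER_RESOLUTION = 0.99  # USD, the Fin price quoted in the text


def expected_margin_per_conversation(cost_per_conversation: float,
                                     resolution_rate: float) -> float:
    """Expected revenue minus API cost for one conversation, in USD.

    Revenue is only earned on resolved conversations, but the API cost
    is incurred on every conversation, resolved or not.
    """
    expected_revenue = resolution_rate * PRICE_PER_RESOLUTION
    return expected_revenue - cost_per_conversation


def break_even_resolution_rate(cost_per_conversation: float) -> float:
    """Resolution rate at which expected revenue equals the API cost."""
    return cost_per_conversation / PRICE_PER_RESOLUTION


if __name__ == "__main__":
    for cost in (0.10, 0.30, 0.50):
        margin = expected_margin_per_conversation(cost, resolution_rate=0.5)
        print(f"cost ${cost:.2f}: margin ${margin:.3f}, "
              f"break-even rate {break_even_resolution_rate(cost):.0%}")
```

Under these assumptions the expected revenue is about $0.50 per conversation, so the margin is comfortable at the low end of the cost estimate and close to zero at the high end.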

Although base costs for token usage are expected to decrease and context windows will expand, we believe this factor is still under-appreciated. Many boards are likely just starting to grasp the unit economics of AI-based chat products.

Platform Risk

Another key consideration is the platform risk associated with building on top of a proprietary model. There is a history of products becoming overly dependent on third-party APIs, only to suffer when those platforms change or discontinue their services (e.g., Facebook Apps, Twitter's API).

It might be unfair to compare providers like OpenAI, Google, and Anthropic to those platforms, as their business models depend on consumer and business usage of their models. Yet, we've seen how updates to an underlying model can change the performance of any product built on top of it. This has led to the emergence of a new category of LLM evaluation and deployment tools designed to monitor LLM response quality. Examples include Context.ai, Agenta.ai, Logspend and Opper.

Researchers have shown that degradation in OpenAI’s model response quality can occur in certain contexts. This is a constant ebb and flow; some updates will improve specific use cases, while others may make them worse. The model might improve generally but underperform in specific scenarios. As a developer, this is another form of the general-purpose tax you pay.

With the black-box nature of neural networks, it's also not always clear what’s changed. You can see when certain evaluators are failing, but that doesn’t mean you know why they’re underperforming or how to fix it. This can lead to a constant game of cat and mouse to monitor performance and adapt to the latest model. Due to the stochastic nature of LLMs, this is not a trivial problem. A small deviation in the underlying model can lead to a significant change at the application layer.

If you build your own models, you gain control over the end-to-end release cycle. This makes product development more consistent and reduces the risk of your LLM response quality regressing. You’ll also develop the skillsets required in-house to monitor and improve model performance. Outsourcing this expertise to a provider is another form of platform risk.

Elastic Demand

AI makes intelligence abundant for the first time in history. When applied to the customer service space, you can think of it in two phases — before and after the introduction of AI agents.

Before AI agents, customer support had a natural scarcity. You could raise unlimited support tickets, but the number of human agents was limited, so you expected to wait for responses.

After the introduction of AI agents, both demand and supply are uncapped. Customers can raise unlimited support tickets and get real-time answers from AI agents. This efficiency boost will lead to increased usage. Intercom expects Fin to handle over 70% of all support cases within a couple of years.

For instance, if you're unsure how a feature works on a new software tool, you might have previously checked the support docs yourself. Now, you'll likely ask the AI agent to handle it for you. Ramp just shipped an AI-native feature which does exactly this — it can show you how to do anything on the platform from a simple prompt. So the overall number of agent interactions will grow and the cost of servicing each business is also going to increase in lock-step.

This phenomenon of elastic demand is known as Jevons Paradox. Historically, increased efficiency has often led to more consumption. For example, more efficient coal extraction led to increased coal consumption and better motorways resulted in more traffic. Marc Andreessen and Ben Horowitz discussed this paradox on a recent podcast.

Elastic demand will affect every vertical that AI touches, leading to both increased usage by customers and increased costs for servicing those customers. Consumers and businesses will expect more from software, making cost control crucial to meet growing demand.

Build or Buy

Ultimately, the decision to buy or build your own model will depend on each company’s circumstances, leading to a market bifurcation.

For most technology businesses, where AI augments their core value proposition, paying for access to the best models is the rational choice. Smaller companies and startups will also benefit from existing providers, shifting to a 'build it' mode if unit economics become a concern as they grow.

However, for companies like Intercom, where AI is central to their value proposition, building and maintaining their own models might be key to staying ahead of the competition. Databricks demonstrated this recently with the release of their open-source model DBRX.

Whether closed or open-source, we expect more AI native companies to develop their own models to solve some of the problems identified above:

They can specialise and avoid paying a general-purpose tax, tailoring their models to power the agentic workflows that they’re selling.

They can better manage costs and achieve gross margins closer to the roughly 80% typical of traditional SaaS, while demonstrating significant efficiency gains.

They can reduce platform risk at the application layer, providing a more consistent way to build on top of the models that they operate.

They can develop the skills in-house to manage their own models, nurturing talent for roles like ML Engineers, LLMOps and Research Scientists.

They can prepare for increased software usage and build in scale advantages that compound over time, carving out additional defensibility from competitors.

Conclusion

We’re still very early in the AI adoption curve, with potentially twenty years of improvements ahead, similar to the Internet from the early 2000s onwards. Like that era, the first few years will define the winners and losers in each category.

As Eoghan McCabe mentioned on a recent podcast with Kleiner Perkins:

Every single software company that sells seats for people to do work is about to be disrupted….. AI is going to do the work that these people used to do. So every company like Intercom, Salesforce, ServiceNow needs to become an AI company.

Intercom is targeting a $350B market opportunity. Investing $100M to secure a defensible lead is a great bet. We expect similar announcements from other verticals soon. Kudos to the Intercom team for leading the charge and demonstrating the ambition needed to thrive in this new paradigm. It's a great time to be building technology.

As always, the biggest risk is doing nothing and getting left behind.

Sources:

https://research.contrary.com/reports/intercom

https://openai.com/api/pricing/

https://www.intercom.com/pricing

https://www.intercom.com/fin/demo/preview?token=b0c04693-ebe5-4a96-b035-f77de13bbdb4

https://community.intercom.com/fin-ai-agent-59/fin-resolution-rate-4784

https://www.forbes.com/sites/stevemcdowell/2024/04/08/databricks-new-open-source-llm/